I’ve Seen This Transition Before

How moving from low-level systems to Windows in the 1990s explains what AI will do to software today

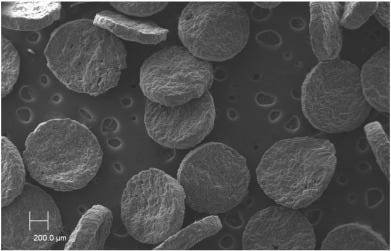

When I first started working, in the early 1990s, I joined a team building X-ray detectors for electron microscopes. These weren’t general-purpose machines. They were specialised scientific instruments where the X-rays emitted by a sample formed a chemical signature, allowing you to identify precisely what materials you were looking at under the microscope.

What mattered, though, was not just the physics. It was the software.

I joined at a moment of transition. The team had spent years working in a world where “software” meant everything. They wrote the graphics drivers. They wrote the operating system. They wrote low-level libraries. They worried constantly about memory limits, hardware quirks, and processor behaviour. When a new microprocessor or graphics chip appeared, the cycle was familiar: acquire the hardware, get someone who understood compiler compilers to build a compiler for their variant of C or Fortran, recompile everything, debug relentlessly, and only then add new functionality.

It was an impressive system, and the people I learned from were deeply skilled. They could reason about the entire stack in a way that is now genuinely rare. But most of their effort was spent just getting the machine to work at all.

I arrived just as they were migrating to Windows.

From the outside, that sounds banal. From the inside, it was transformative. Overnight, huge parts of the problem space simply disappeared. No more writing graphics drivers. No more home-grown windowing systems. No more worrying about entire classes of low-level issues that Windows absorbed and standardised.

What replaced that work was something else: focus.

Instead of asking “how do we get pixels on the screen efficiently?”, the question became “what does the customer actually need?” Instead of building infrastructure, we could build applications.

One of the first projects I managed was a bespoke gunshot residue analysis system. If you’re unfamiliar with it, the idea is that you can match the chemical signature found on a fired bullet with residues found on a suspect, helping to establish whether that person fired the weapon. Previously, this kind of analysis was slow, cumbersome, and limited to specialist labs. On Windows, we created a streamlined, purpose-built application that fit directly into forensic workflows.

Today, versions of these systems are used in police departments in cities around the world. At the time, that reach would have been unimaginable.

What also struck me was a subtle cultural shift. Some of the people I worked with didn’t quite think I was a “proper” software engineer. I had only once debugged code with an oscilloscope. I wasn’t fluent in the lowest levels of the stack in the way they were. I lived from Windows upwards.

They worried about everything. I worried about outcomes.

In retrospect, this wasn’t a loss of rigour. It was a change in where rigour applied.

I think about that period a lot when people talk about AI and software today.

Right now, we still frame programming as a syntactic activity. We argue about languages, frameworks, tooling, and style. We train people to think in terms of exact instructions, rigid structures, and mechanical correctness. That matters today because humans are still responsible for translating intent into executable form.

But AI changes that boundary.

The next generation of people building software will not worry about syntax in the way we do. They won’t need to internalise every rule of a language or remember every API shape. What they will need to worry about is something else entirely: what the system does, whether it does it correctly, whether it is secure, whether it behaves well under edge cases, and whether it actually serves the user.

This is not a lowering of standards. It is a relocation of responsibility.

The Windows transition didn’t make software simpler in total; it made it possible to spend complexity where it mattered. As a result, applications became more bespoke, more usable, and more tightly aligned with real-world problems. Entire categories of software only became viable because the platform removed the need to reinvent everything beneath them.

AI is poised to do the same.

There is a common claim that AI will eliminate software development jobs. I’ve heard this argument before, in different forms. The historical record doesn’t support it. There were far more software developers in the 1990s than there were in the 1970s or 1980s, in large part because writing software stopped requiring you to also write an operating system and graphics stack.

When the barrier drops, demand expands.

If you can only build applications by mastering the entire machine, software remains a niche. If you can build on a powerful abstraction layer, software spreads everywhere. That is what Windows did, and that is what AI will do.

Yes, future developers will not know many of the things I know. In the same way, I never knew many of the things the engineers who trained me knew. They could see deeper into the machine. I could see further into the problem space. Each generation gives something up and gains something else.

Crucially, we did not make worse software. We made better software because it was more aligned with human needs.

My view is that AI is an enabler, not a destroyer. It will allow us to build more software for more specific contexts with higher expectations of usability and correctness. It will shift the centre of gravity in software engineering away from mechanical translation toward judgment, design, and responsibility.

I’ve seen this transition before. It didn’t end with fewer builders. It ended with software everywhere.