The Most Interesting Consumer Surveys Are the Ones Humans Will Never Take

Or why the most useful questions are the ones nobody will answer

For decades, survey design has been dictated by the tolerance of the human respondent, not by the needs of the business. Every instrument is a compromise. Keep it short. Keep it simple. Keep it linear. Avoid repetition. Avoid complexity. Avoid anything that might frustrate or fatigue the panel.

We accept these compromises not because the business doesn’t need the richer information, but because humans won’t tolerate the instrument required to produce it.

This is one of research’s open secrets: the most valuable questions are often the ones we can’t ask.

AI changes that. Carefully.

Synthetic models, when trained privately on your own historical survey data, open up a new category of research: the impossible survey. The kind that no human would ever complete, but that contains structure, logic, and signals worth exploring. Not invented data. Not simulated respondents from nowhere. But grounded extensions of your actual data, moving through spaces that would otherwise remain closed.

But it’s only useful if you treat it like a model, not a shortcut to truth.

Synthetic doesn’t create answers; it extends patterns. It doesn’t discover what’s true; it makes visible what your data already implies. That’s the line. And it matters.

Once you stay on the right side of that line, impossible surveys become not just feasible but essential.

Every researcher knows the human constraints. Real respondents fatigue quickly. They satisfice. They abandon long surveys. They flatten nuance under cognitive strain. They misread complex structures. They won’t tolerate sequential trade-offs or high-redundancy tasks. So we adapt. We drop ideas. We trim concepts. We simplify grids. We avoid the extremes. These are not methodological preferences. They are coping strategies. The data gets smaller because humans demand it.

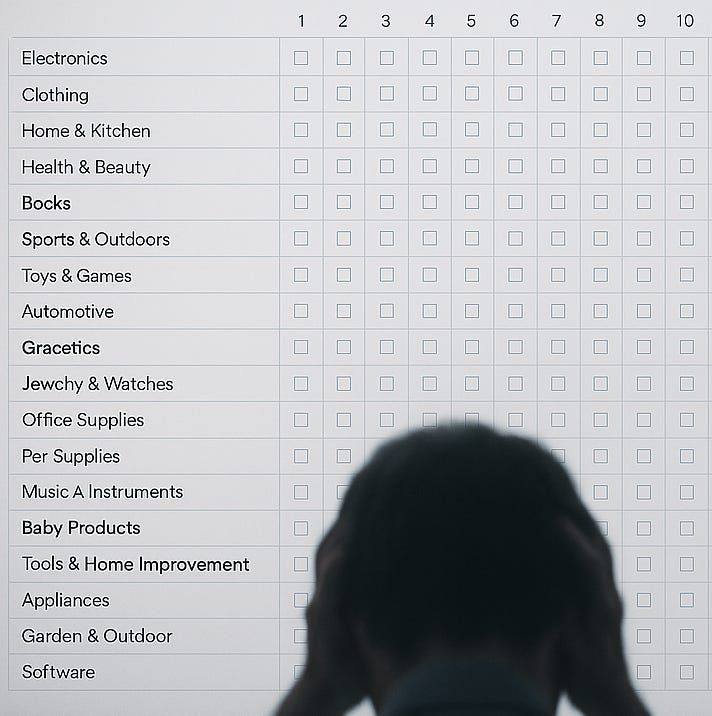

Synthetic changes the constraint. When trained on a clean, consistent, well-structured dataset, a private synthetic model can handle what no respondent ever would. One hundred attributes. Two hundred concept permutations. Thousands of pairwise trade-offs. It doesn’t complain. It doesn’t drift. It doesn’t get tired or lazy. It just runs the space.
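To put rough numbers on that scale, here is a minimal sketch in Python. It assumes a complete pairwise design, in which every attribute is compared against every other attribute exactly once; real designs are often fractional, so treat this as an upper bound. The function name `pairwise_tradeoffs` and the attribute counts are illustrative assumptions, not taken from any particular survey tool.

```python
from math import comb


def pairwise_tradeoffs(n_items: int) -> int:
    """Count the distinct unordered pairs among n_items (assumes a complete pairwise design)."""
    return comb(n_items, 2)


# Hypothetical design sizes, chosen only for illustration.
for n in (10, 30, 100):
    print(f"{n} attributes -> {pairwise_tradeoffs(n)} pairwise comparisons")
```

Under this assumption, 100 attributes already imply 4,950 comparisons, far more than a single human respondent will sit through.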

This isn’t attitude generation. It’s pattern extension, which only works if the patterns are present to begin with. If your past data contains real signal (how preferences cluster, how features cohere, how trade-offs shift across subgroups), the model can carry those patterns into new designs. You’re not asking it to invent sentiment. You’re asking it to map a structure. And when the structure is there, it performs.

This becomes particularly powerful at the edge. Most good strategy happens at the edges, not in the averages. But edge-case exploration is a nightmare for survey design: too niche, too complex, too repetitive, too weird. Which is why most of it gets dropped before it ever reaches the field. With synthetic, you don’t need to drop it. You can run the fringe, identify where patterns hold or collapse, and take the promising paths into live validation. You still need humans, but you only need them for the parts that matter.

It also helps resolve one of the most persistent tensions in insight: the backlog of ambiguous questions that stakeholders never stop asking. What happens if we push the idea further? What if we combine these features? Where does this concept start to break? How sensitive is this position to context, pricing, or sentiment? These are real questions. But most teams can’t afford to answer them: not in timeline, not in sample, not in budget. So we guess.

Impossible surveys let you stop guessing. You can stress-test ideas before running a tracker. You can model logical coherence before re-fielding. You can run boundary conditions, contradiction checks, or feasibility passes without spending a single cent on sample. And because it’s your data, not someone else’s model, you can trust the structure being extended.
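What a contradiction check looks like will depend on the study. As one possible illustration, the sketch below scans a set of pairwise preferences for intransitive cycles (A beats B, B beats C, yet C beats A). Everything here is an assumption made for the example: the function name `intransitive_triples`, the representation of results as `(winner, loser)` pairs, and the attribute names.

```python
from itertools import combinations


def intransitive_triples(wins):
    """Return triples (a, b, c) where a beats b, b beats c, and c beats a.

    `wins` is a set of (winner, loser) pairs; this format is a hypothetical
    choice for the example, not a standard.
    """
    items = sorted({item for pair in wins for item in pair})
    cycles = []
    for a, b, c in combinations(items, 3):
        # A three-way cycle can run in either direction, so check both.
        if (a, b) in wins and (b, c) in wins and (c, a) in wins:
            cycles.append((a, b, c))
        elif (b, a) in wins and (c, b) in wins and (a, c) in wins:
            cycles.append((a, c, b))
    return cycles


# Made-up pairwise outcomes, for illustration only.
wins = {
    ("price", "design"),
    ("design", "battery"),
    ("battery", "price"),
    ("price", "brand"),
}
print(intransitive_triples(wins))  # [('battery', 'price', 'design')]
```

A cycle like this is not proof that anything is wrong, but it flags a region of the design worth a closer look before committing live sample to it.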

This doesn’t just save money. It avoids bad research. It cuts waste, eliminates dead ends, and gives researchers room to work. The result is tighter fieldwork, fewer errors, better decisions, and far less noise.

None of this replaces real respondents. It just ensures they’re used where they’re needed. You don’t need a human to rank 200 attributes. But you absolutely need them to surface emotion, interpret nuance, and validate signals in the wild. Synthetic can’t do culture. It can’t capture variance that was never in the training data. It can’t respond to the moment. That’s why the loop matters. Synthetic expands the space. Humans choose what’s important. The interplay is where the value lives.

But none of this works without one critical rule: synthetic models can only explore what you’ve already learned. You cannot generate truth from nothing. The training data has to be strong. The patterns have to be real. The respondent base must be large enough to capture variation. The model has to be private. And the guardrails have to be clear.

When that’s true, impossible surveys become one of the most powerful tools available to researchers. Not to predict the future. But to explore the shape of it.

I’m not proposing you stop running real surveys with real people. I’m suggesting you ask enough questions in those surveys to support impossible surveys afterward, run with synthetic respondents trained on exactly the responses you just collected.

The future of research isn’t just faster. It’s deeper. Traditional surveys reveal what people are willing to tell us. Impossible surveys reveal what people can’t, because the instrument was never tolerable to begin with.

And now, for the first time, we can run them. Not to replace the human layer. But to make it sharper, faster, and vastly more focused. The best questions were never unaskable. They were just impossible to answer. Until now.